Since the early days of cloud computing, the hybrid cloud has been on everyone’s lips. Praised as a universal remedy by vendors, consultants and analysts alike, the combination of various cloud deployment models is a constant topic in discussions, on panels and in conversations with CIOs and IT infrastructure managers. The core questions that need to be clarified: What are the benefits, and do credible hybrid use cases actually exist that can serve as best-practice guidance? This analysis answers these questions and also describes the ideas behind multi cloud scenarios.

Hybrid Cloud: Driver behind the Public Cloud

Many developers and startups embrace the public cloud to escape high and unpredictable upfront investments in infrastructure resources (servers, storage, software). Examples like Pinterest and Netflix show real use cases and confirm the benefit. Without the public cloud, Pinterest would never have experienced such growth in such a short time. Netflix also benefits from scalable access to public cloud infrastructure. In the fourth quarter of 2014, Netflix delivered 7.8 billion hours of video, which corresponds to 24,021,900 terabytes of data traffic.

However, what these prime examples hide is that all of them are greenfield approaches, like almost every workload developed as a native web application on public cloud infrastructure, and they represent just the tip of the iceberg. The reality in the corporate world looks completely different. Inside the iceberg you find plenty of legacy applications that are not yet ready to be operated in the public cloud. Furthermore, there are requirements and scenarios for which the public cloud is unsuitable. In addition, most infrastructure managers and architects know their workloads and their demands very well. Providers should finally accept this and admit that in most cases the public cloud is too expensive for static workloads and that other deployment models are more attractive.

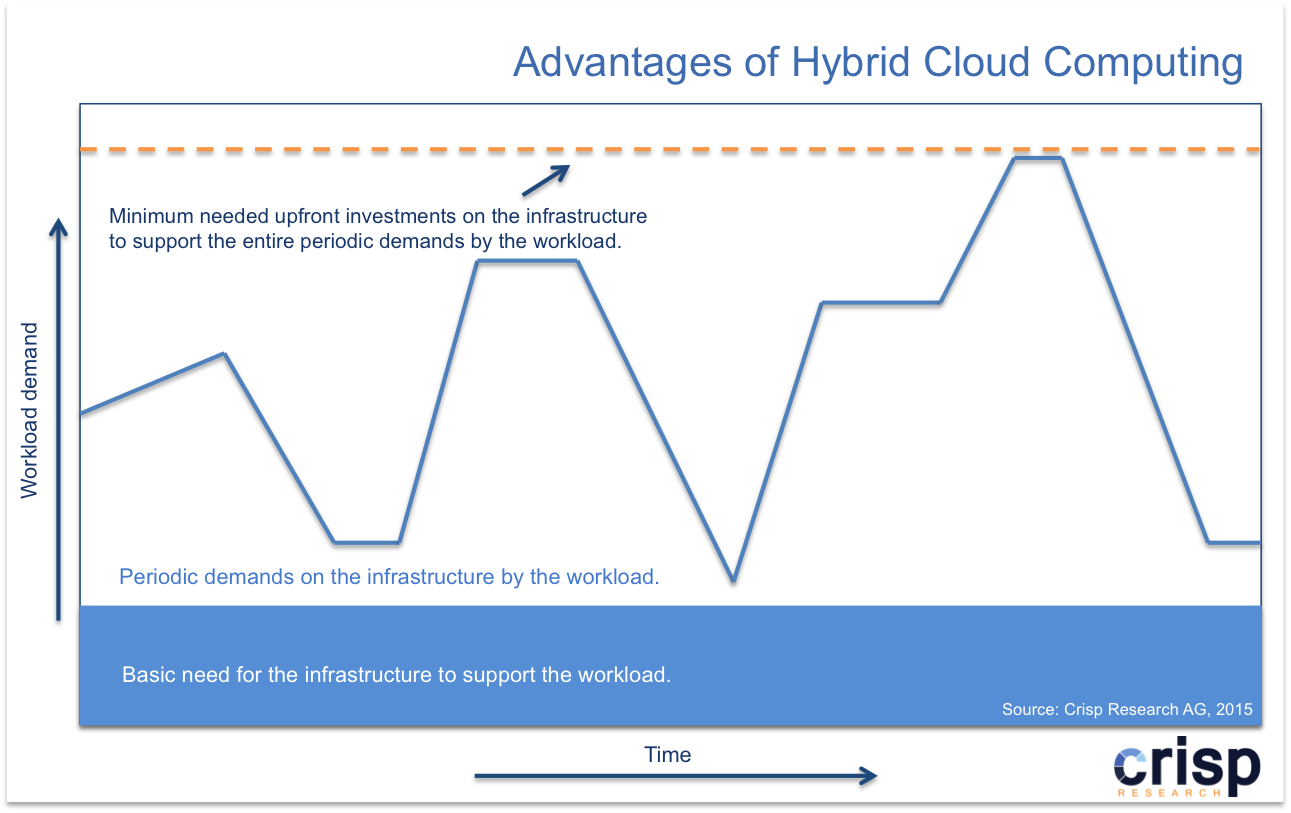

By definition, the hybrid cloud is limited to connecting a private cloud with the resources of a public cloud. In this case, a company runs its own cloud infrastructure and uses the scalability of a public cloud provider to obtain additional resources such as compute, storage or other services on demand. With the rise of further cloud deployment models, other hybrid cloud scenarios have developed that include hosted private and managed private clouds. In particular, for most static workloads (those whose average infrastructure requirements are known), an externally hosted static infrastructure fits very well. Periodic variations caused by marketing campaigns or the Christmas season can be absorbed by dynamically adding further resources from a public cloud.
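The split between a static base and on-demand burst capacity can be sketched in a few lines of Python. This is a minimal illustration, not a sizing method: the function name `split_demand`, the unit (servers) and the monthly demand figures are hypothetical and chosen only to show a periodic peak.

```python
def split_demand(demand, base_capacity):
    """Split each period's demand into a static part and a public cloud burst part.

    demand        -- resource units needed per period (hypothetical numbers)
    base_capacity -- units provided by the static hosted or private infrastructure
    """
    plan = []
    for needed in demand:
        static_part = min(needed, base_capacity)     # served by the static infrastructure
        burst_part = max(0, needed - base_capacity)  # added on demand from a public cloud
        plan.append((static_part, burst_part))
    return plan


# Hypothetical monthly demand in servers, with a seasonal peak in November and December.
monthly_demand = [40, 42, 41, 40, 43, 42, 40, 41, 44, 45, 70, 95]
plan = split_demand(monthly_demand, base_capacity=45)

for month, (static_part, burst_part) in enumerate(plan, start=1):
    print(f"month {month:2d}: static={static_part:3d} burst={burst_part:3d}")
```

With an assumed base capacity of 45 servers, only the two peak months draw on the public cloud in this example; the rest of the year runs entirely on the static infrastructure.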

This approach can be mapped to many other scenarios. Pure infrastructure resources like virtual machines, storage or databases need not be the only focus. The hybrid use of value-added services from public cloud providers within self-developed applications should also be considered, either to use a ready-made function instead of developing it again in-house or to benefit from external innovations immediately. With this approach the public cloud offers companies real value without outsourcing the whole IT environment.

Real hybrid cloud use cases can be found at Microsoft, Rackspace, VMware and Pironet NDH:

- Microsoft Azure + Lufthansa Systems

To expand its internal private cloud and worldwide datacenter capacities, Lufthansa relies on Microsoft Azure. One of the first hybrid cloud scenarios was a disaster recovery concept in which Microsoft SQL Server databases are mirrored to Microsoft Azure in a Microsoft datacenter. In case of a failure within the Lufthansa environment, the databases continue to operate in a Microsoft datacenter without interruption. Furthermore, Lufthansa’s own infrastructure resources are extended by Microsoft’s worldwide datacenters to deliver a consistent service offering to customers without building its own infrastructure globally.

- Rackspace + CERN

As part of the CERN openlab partnership, CERN uses public cloud infrastructure from Rackspace to obtain compute resources on demand. This typically happens when physicists need more compute than the local OpenStack infrastructure is able to deliver. CERN experiences this regularly during scientific conferences, when the latest data from the LHC and its experiments are being analyzed. Applications with a low I/O rate are well suited to being outsourced to Rackspace’s public cloud infrastructure.

- Pironet NDH + Malteser

As part of the “Smart.IT” project, Malteser Deutschland relies on a hybrid cloud approach. Applications in its own datacenter are combined with communication services like Microsoft Office 365, SharePoint, Lync and Exchange from a public cloud. Applications that are critical in terms of data protection law, such as electronic patient records, are served from a private cloud in a Pironet datacenter.

- VMware + Colt + Sega Europe

Since the beginning of 2012, game manufacturer Sega Europe has relied on a hybrid cloud to give external testers access to new games. Previously this was done via a VPN connection into the company’s own network. Sega now runs its own private cloud to provide development and test systems for internal projects. This private cloud is directly connected to a VMware-based infrastructure in a Colt datacenter. On the one hand, Sega can thus obtain additional resources from a public cloud to absorb peak loads. On the other hand, the game testers get a dedicated testing area. The testers no longer have to access the Sega corporate network but instead test on servers within the public cloud. When the tests are finished, Sega IT shuts down the servers that are no longer needed without any intervention by Colt.

Multi Cloud: Automotive Industry as the role model

With the continuous spread of the hybrid cloud, multi cloud scenarios are also moving into focus. To better understand the multi cloud, it helps to consider the supply chain model of the automotive industry. An automaker relies on various (sometimes redundant) suppliers that provide it with individual components, assemblies or complete systems. In the end, the automaker assembles the parts, delivered just in time, in its own assembly plant.

The multi cloud and the hybrid cloud adopt this idea from the automotive industry: a company works with more than one cloud provider (cloud supplier) and ultimately integrates everything with its own cloud application or its own cloud infrastructure.

As part of the cloud supply chain, three delivery tiers exist that can be used to develop a custom cloud application or to build a custom cloud infrastructure:

- Micro Service: Micro Services are granular services, such as Microsoft Azure DocumentDB and Microsoft Azure Scheduler or Amazon Route 53 and Amazon SQS, that can be used to develop a custom cloud-native application. Micro Services can also be integrated into an existing application that runs on a company’s own infrastructure and is thus extended by the function of the Micro Service.

- Module: A Module encapsulates a scenario for a specific use case and thus provides a ready-to-use part for an application. Examples include Microsoft Azure Machine Learning and Microsoft Azure IoT. Like Micro Services, Modules can be used for development purposes or for integration into applications. Compared to Micro Services, however, they provide greater functionality.

- Complete System: A Complete System is a SaaS offering, that is, an entire application that can be used directly within the company. However, it still needs to be integrated with other existing systems.

In a multi cloud model, an enterprise cloud infrastructure or a cloud application can draw on more than one cloud supplier and thus integrate various Micro Services, Modules and Complete Systems from different providers. In this model, a company develops most of the infrastructure or application on its own and extends the architecture with additional external services that would require far too much effort to redevelop in-house.
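One way to picture the cloud supply chain is as a bill of materials for an application. The Python sketch below is only an illustration under stated assumptions: the `Tier`, `Component`, `components_by_supplier` and `is_multi_cloud` names are hypothetical, and the "Hypothetical CRM" entry is invented to stand in for a Complete System. The other entries reuse service names mentioned in the tiers above.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    """The three delivery tiers of the cloud supply chain."""
    MICRO_SERVICE = "Micro Service"
    MODULE = "Module"
    COMPLETE_SYSTEM = "Complete System"


@dataclass(frozen=True)
class Component:
    name: str
    supplier: str  # the cloud provider acting as supplier
    tier: Tier


def components_by_supplier(components):
    """Group the externally sourced components by cloud supplier."""
    grouped = defaultdict(list)
    for component in components:
        grouped[component.supplier].append(component)
    return dict(grouped)


def is_multi_cloud(components):
    """An application is multi cloud here if it draws on more than one supplier."""
    return len({component.supplier for component in components}) > 1


# Illustrative bill of materials; the last entry is a made-up placeholder.
application = [
    Component("Azure DocumentDB", "Microsoft Azure", Tier.MICRO_SERVICE),
    Component("Amazon SQS", "Amazon Web Services", Tier.MICRO_SERVICE),
    Component("Azure Machine Learning", "Microsoft Azure", Tier.MODULE),
    Component("Hypothetical CRM", "Hypothetical SaaS vendor", Tier.COMPLETE_SYSTEM),
]

for supplier, parts in components_by_supplier(application).items():
    listing = ", ".join(f"{part.name} ({part.tier.value})" for part in parts)
    print(f"{supplier}: {listing}")
print("multi cloud:", is_multi_cloud(application))
```

Grouping by supplier makes the supplier management effort visible: every additional key in the result is another provider relationship and another integration point to maintain.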

However, this leads to higher costs at the cloud management level (supplier management) as well as at the integration level. Solutions like SixSq Slipstream or Flexiant Concerto specialize in multi cloud management and support the usage and management of cloud infrastructure across providers. Elastic.io, by contrast, works on several cloud layers and across various providers, and serves as a central connector to make cloud integration easier.

The cloud supply chain is an important part of the Digital Infrastructure Fabric (DIF) and should always be considered in order to benefit from the variety of cloud infrastructures, platforms and applications. The only disadvantage is that the value-added services (Micro Services, Modules) named above are currently available only in the portfolios of Amazon Web Services and Microsoft Azure. With the rapid development of use cases for the Internet of Things (IoT), IoT platforms and mobile backend infrastructure are becoming ever more significant. Ready-made solutions (Cloud Modules) help potential customers reduce the development effort and provide impulses for new ideas.

Infrastructure providers whose portfolios still focus on pure infrastructure resources like servers (virtual machines, bare metal), storage and some databases will disappear from the radar in the medium term. Only those who enhance their infrastructure with enablement services for web, mobile and IoT applications will remain competitive.